Your team already proved the hard part. The agent works, the client likes the demo, and everyone wants it live. Then the deployment work starts, and that’s where many consulting client projects slow down. One client needs isolated credentials, another needs approval logs, a third wants WhatsApp access, and your engineers end up rebuilding agent infrastructure instead of shipping outcomes.

That pressure is showing up across the market. McKinsey’s 2025 survey found 62% of companies are at least experimenting with AI agents, while no more than 10% report scaling them in any given business function. That matches what consulting teams run into in practice: pilots are easy, repeatable production hosting is not. If you’re comparing options, it helps to start with platforms built for secure rollout instead of stitching together cloud services by hand. If you also want a broader view of adjacent deployment stacks, this roundup of top model deployment solutions is a useful companion.

Table of Contents

- 1. Donely

- 2. Microsoft Copilot Studio

- 3. Amazon Bedrock AgentCore (Agents for Bedrock)

- 4. Google Cloud Gemini Enterprise Agent Platform (formerly Vertex AI Agent Builder)

- 5. Zapier Agents (Zapier Central)

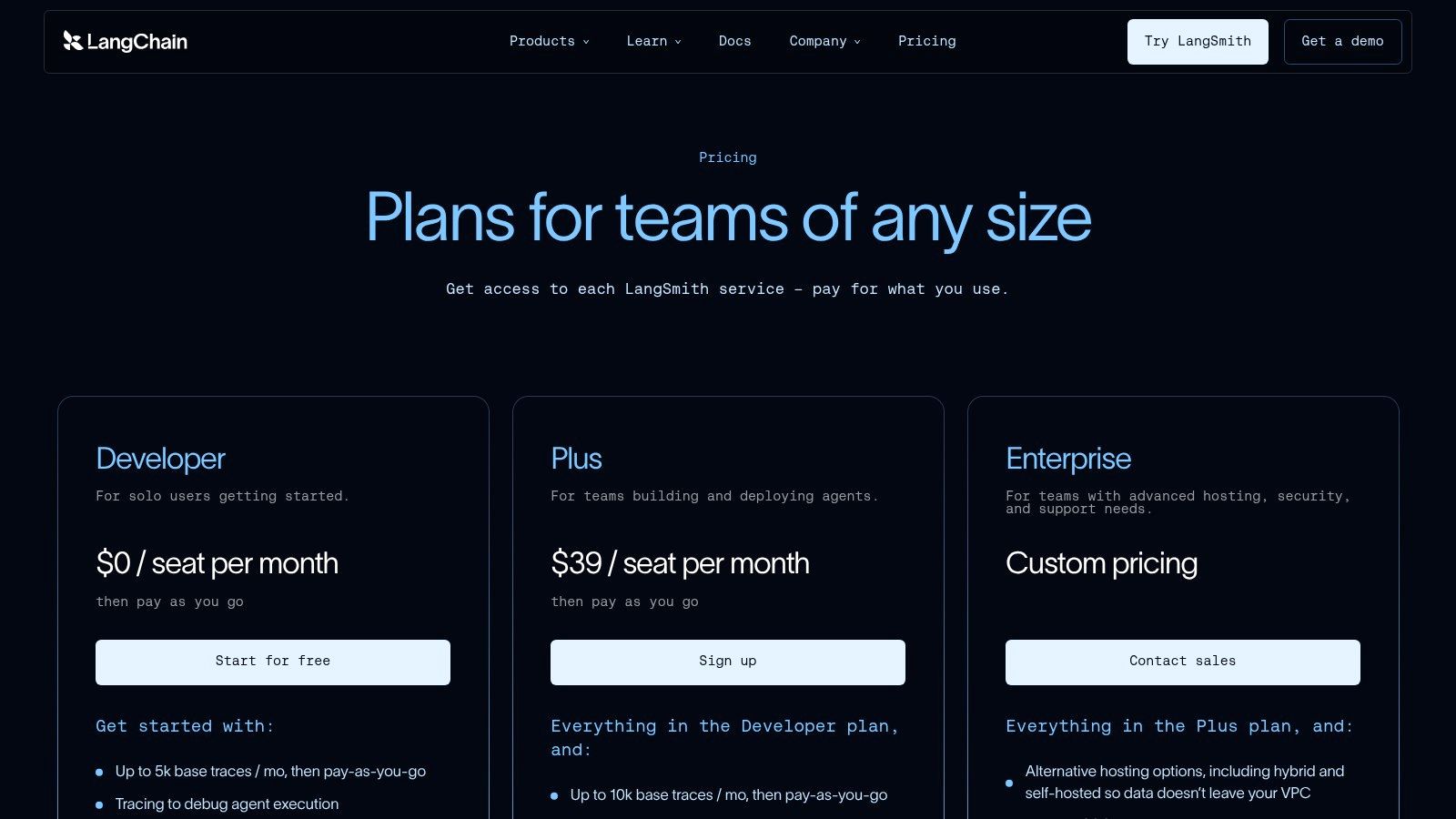

- 6. LangChain LangSmith (LangGraph) Deployment

- 7. LlamaIndex + LlamaCloud

- 8. Relevance AI (AI Workforce/Agents)

- 9. FlowiseAI Cloud

- 10. Dify.ai (Cloud and Self-Hosted)

- Top 10 AI Employee Agent Hosting Platforms, Feature Comparison

- From Evaluation to Deployment: Your First Client Agent

1. Donely

Donely is the most opinionated option on this list for AI employee agent hosting, and that’s why it stands out for agencies and implementation partners. It’s built around a simple idea: every client, department, or use case should get its own isolated instance, but your team should still operate everything from one dashboard. That operating model matters more than flashy demos once you’re juggling multiple client environments.

Provisioning speed is usually the first thing teams notice. Donely is designed so you can launch production-ready OpenClaw-powered agents in roughly 60 to 120 seconds, connect them to 850+ tools, and deploy to channels including WhatsApp, Telegram, Discord, and Slack without writing Dockerfiles or managing servers. For firms that need simplified hosting setup and fast deployment, that removes the usual handoff gap between “the agent works” and “the client can use it.”

Why consultants shortlist it first

The platform’s real differentiator is multi-instance architecture. Instead of creating separate accounts, separate VPS setups, or ad hoc cloud projects for each client, you run isolated instances side by side with per-instance RBAC, scoped data access, unified audit logs, centralized monitoring, and consolidated billing. Donely’s guide to AI employee hosting shows the same pattern clearly: one control plane, isolated agent environments, and a growth path from solo work to managed fleets.

That approach lines up with where the market is headed. A 2025 PwC survey of 300 senior executives found that 79% said AI agents were already being adopted in their companies, and 88% planned to increase AI budgets in the next 12 months because of agentic AI. When consulting teams inherit that demand, they need managed hosting platform features that reduce repeat infrastructure work, not just a builder UI.

Practical rule: If each new client requires a fresh Terraform repo, custom SSH access, and separate billing tracking, your hosting model won’t scale with your services business.
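To make the multi-instance idea concrete, here is a minimal Python sketch of one control plane managing isolated client instances, with per-instance roles and a single audit log. It is an illustrative data model only, not Donely’s API: `ControlPlane`, `Instance`, the role names, and the permission sets are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# ASSUMPTION: hypothetical role-to-permission mapping for illustration;
# real platforms define their own roles and permissions.
ROLE_PERMISSIONS = {
    "admin": {"read", "configure", "deploy"},
    "operator": {"read", "deploy"},
    "viewer": {"read"},
}


@dataclass
class Instance:
    """One isolated client environment with its own member list."""
    client: str
    members: dict[str, str] = field(default_factory=dict)  # user -> role


@dataclass
class ControlPlane:
    """Single dashboard over many instances, with one unified audit log."""
    instances: dict[str, Instance] = field(default_factory=dict)
    audit_log: list[dict] = field(default_factory=list)

    def add_instance(self, client: str) -> Instance:
        instance = Instance(client)
        self.instances[client] = instance
        return instance

    def authorize(self, user: str, client: str, action: str) -> bool:
        """Check the user's role on this instance only, and record the attempt."""
        role = self.instances[client].members.get(user)
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "client": client,
            "action": action,
            "allowed": allowed,
        })
        return allowed


plane = ControlPlane()
plane.add_instance("acme").members["dana"] = "admin"
plane.add_instance("globex").members["dana"] = "viewer"

print(plane.authorize("dana", "acme", "deploy"))    # admin on acme
print(plane.authorize("dana", "globex", "deploy"))  # only a viewer on globex
print(plane.authorize("sam", "acme", "read"))       # not a member of acme
```

The point of the sketch is that a role granted on one client’s instance says nothing about another, while every decision still lands in one log that a project lead can review.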

Pricing is also unusually straightforward for agency math. There’s a free tier, Personal at $25 per month per instance, Team at $50 per month per instance, and Enterprise for unlimited instances with SSO, dedicated support, custom SLAs, HIPAA-ready architecture, and SOC 2 in progress. Automatic volume discounts apply as instance counts grow, which is the right pricing shape for firms expanding from one pilot to a portfolio of client agents.

Where the trade-offs show up

Donely is strongest when your delivery model values speed, isolation, and operational consistency over deep infrastructure customization. If your team wants total cloud-level control over every runtime component, a hyperscaler stack may fit better. And if you’re in a tightly regulated environment, you’ll want to validate every compliance requirement directly with the vendor rather than assuming “HIPAA-ready” covers your exact deployment model.

Still, for consulting firm client projects, the fit is obvious.

- Best for fast onboarding: New client instances can be created quickly without separate cloud setup.

- Best for governed multi-tenancy: Per-instance RBAC and audit logs are built into the operating model.

- Best for channel-ready launches: WhatsApp, Slack, Telegram, and Discord support reduce last-mile deployment work.

- Watch the pricing model: Per-instance costs are easy to understand, but large fleets still need cost modeling.
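For the cost modeling mentioned in the last point, a rough Python sketch like the one below can help. The per-instance prices are the ones quoted above. The discount thresholds are an assumption made up for illustration, since only the 10–30% range appears in the comparison table, so check the vendor’s current pricing before relying on any output.

```python
# Per-instance list prices quoted in the article (USD per month).
PLAN_PRICE = {"personal": 25, "team": 50}

# ASSUMPTION: the instance thresholds are invented for illustration.
# Only the 10-30% discount range comes from the article's comparison table.
DISCOUNT_TIERS = [(50, 0.30), (25, 0.20), (10, 0.10)]  # (min instances, discount)


def monthly_cost(plan: str, instances: int) -> float:
    """Return the estimated monthly bill for a fleet on a single plan."""
    # Tiers are ordered from largest to smallest, so the first match wins.
    discount = next((d for minimum, d in DISCOUNT_TIERS if instances >= minimum), 0.0)
    return round(PLAN_PRICE[plan] * instances * (1 - discount), 2)


for count in (3, 12, 60):
    print(count, monthly_cost("team", count))
```

Running the same function across your expected client count for the next few quarters gives a quick view of how hosting spend scales against retainer revenue.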

2. Microsoft Copilot Studio

Microsoft Copilot Studio makes the most sense when the client already runs on Microsoft 365, Azure, Power Platform, and Dataverse. In those environments, the biggest advantage isn’t novelty. It’s policy alignment. Security teams already know the admin surfaces, procurement already knows the vendor, and internal users already work in Teams and Outlook.

For consulting teams, that lowers the political friction of deployment. You’re not asking a client to bless a new stack from scratch. You’re extending one they already trust.

Best when the client already lives in Microsoft

Copilot Studio supports autonomous agents, multi-agent patterns, publishing into Microsoft surfaces, and tenant-level governance. If the project depends on M365 data, internal workflows, and centrally managed identity, that integration can save a lot of implementation effort compared with a standalone platform. Donely’s overview of AI employee platforms is a helpful contrast point here, because it highlights how different the buying motion is between a dedicated agent host and a broader enterprise productivity ecosystem.

The trade-off is complexity at the edge. Smaller consulting projects can get bogged down by Azure prerequisites, Copilot Credits, and governance layers that are perfectly normal for enterprise IT but heavy for fast-moving prototypes. It’s a good fit for standardization, not for minimal setup.

Microsoft is often the easiest sale inside a Microsoft-first client, even when it isn’t the simplest product operationally.

Use it when the client wants internal productivity agents, tenant-wide controls, and native alignment with existing Microsoft operations. Skip it when you need a lightweight managed hosting platform for spinning up many isolated client agents across different organizations.

3. Amazon Bedrock AgentCore (Agents for Bedrock)

Amazon Bedrock AgentCore is for teams that want serious cloud primitives behind their agent infrastructure. It gives you modular runtime services, policy layers, identity controls, observability, managed memory, and secure access patterns inside AWS. If your consulting practice already builds on AWS, that’s powerful.

It’s also not a “set it and forget it” product category. Bedrock gives you managed components, but your team still needs to think like cloud engineers.

Strong control if your team can handle AWS complexity

The biggest strength here is composability. You can shape the runtime, security model, and observability stack around client requirements instead of staying inside a tightly packaged product. That matters for enterprise delivery teams that need IAM integration, auditability, and alignment with existing AWS operations.

The downside is predictable. Pricing and operations can spread across multiple AWS meters and services, which makes it harder to estimate project margins for fixed-scope consulting work. A flexible stack can become an expensive one if nobody owns the usage model carefully.
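One way to keep that usage model owned is to estimate runtime spend before quoting a fixed fee. The Python sketch below assumes per-second CPU and memory metering and uses placeholder rates that are not AWS prices. The `UsageEstimate` fields and the fee and delivery-cost figures are hypothetical, and the sketch ignores other meters such as model inference or storage.

```python
from dataclasses import dataclass


@dataclass
class UsageEstimate:
    sessions_per_month: int
    avg_session_seconds: float
    vcpus: float
    memory_gb: float


# ASSUMPTION: placeholder rates, not AWS prices. Look up the current rate
# for every meter you actually use before quoting a client.
RATE_PER_VCPU_SECOND = 0.00003
RATE_PER_GB_SECOND = 0.000003


def runtime_cost(u: UsageEstimate) -> float:
    """Estimate monthly runtime spend from per-second CPU and memory meters."""
    seconds = u.sessions_per_month * u.avg_session_seconds
    return seconds * (u.vcpus * RATE_PER_VCPU_SECOND + u.memory_gb * RATE_PER_GB_SECOND)


def margin(fixed_fee: float, delivery_cost: float, usage: UsageEstimate) -> float:
    """Return gross margin as a fraction of a fixed monthly fee."""
    return (fixed_fee - delivery_cost - runtime_cost(usage)) / fixed_fee


baseline = UsageEstimate(sessions_per_month=20_000, avg_session_seconds=90, vcpus=1, memory_gb=2)
busy = UsageEstimate(sessions_per_month=200_000, avg_session_seconds=90, vcpus=1, memory_gb=2)

print(f"baseline margin: {margin(5_000, 3_000, baseline):.1%}")
print(f"busy margin:     {margin(5_000, 3_000, busy):.1%}")
```

Comparing a baseline and a high-traffic scenario side by side shows how much of a fixed-scope margin depends on usage the client controls.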

A market report from DataM Intelligence projects the Enterprise AI Agent Adoption market at US$6.65 billion in 2025, growing to US$142.35 billion by 2035, and notes that fewer than 25% of enterprises have advanced to production deployment. Bedrock is one way to close that production gap, but it assumes your team can absorb the integration and governance work that packaged platforms try to hide.

- Choose Bedrock when: The client already operates securely in AWS and wants native cloud controls.

- Avoid it when: Your engagement depends on simplified hosting setup and very fast handoff to non-cloud operators.

4. Google Cloud Gemini Enterprise Agent Platform (formerly Vertex AI Agent Builder)

Google’s Gemini Enterprise Agent Platform is strongest when the client’s data stack already points toward BigQuery, Google security tooling, and broader Google Cloud MLOps. It combines low-code and code-first paths, model access through Model Garden, and enterprise governance features that suit larger programs.

That breadth is useful for consulting teams handling more than one agent pattern. Search assistants, internal ops copilots, and data-grounded task agents can all sit under the same cloud umbrella.

A fit for data-heavy Google Cloud environments

Google’s appeal is less about quick packaging and more about end-to-end platform alignment. If the client wants model choice, strong data connectivity, and formal enterprise controls, it’s a credible option. It also gives technical teams room to move from low-code starts into more engineered implementations without switching ecosystems.

The challenge is practical buying friction. Pricing often requires calculator work or direct quotes, and the platform makes the most sense when the client is already invested in Google Cloud. For a consulting firm trying to standardize a repeatable delivery motion across many clients, that can limit portability.

Use Google here when the project’s center of gravity is data and cloud governance. If the primary problem is onboarding many separate client agents quickly with isolated administration, a more specialized AI employee agent hosting product will usually be easier to operationalize.

5. Zapier Agents (Zapier Central)

Zapier Agents is the fastest path on this list from idea to working automation, especially when the client’s needs are operational rather than highly custom. Sales follow-up, inbox triage, lead qualification, and support routing are natural fits. A consulting team can often stand something up quickly because the integration catalog is already the product.

That makes Zapier attractive for short project windows and lower-complexity service packages.

Fast deployment wins small project windows

The platform’s no-code posture is its biggest strength. Access to a huge app ecosystem, live data sources, browsing, and organization sharing means non-engineering stakeholders can stay involved after launch. For some firms, that’s the difference between a sellable managed service and a one-off technical build. If you’re weighing that style of delivery against a more opinionated host, this piece on a no-code AI agent builder is a useful reference.

The trade-off is depth. Activity quotas and abstraction layers can become painful once agents need longer-running logic, stricter governance, or richer runtime behavior. You can get to value quickly, but you may outgrow the model when the client wants more than app-to-app coordination.

McKinsey estimates AI agents could generate between $2.6 trillion and $4.4 trillion in annual value through use cases such as multi-agent orchestration and governance. Zapier is a good entry point into that value for process-heavy teams, but it isn’t the platform I’d choose first for high-control, multi-client hosting operations.

6. LangChain LangSmith (LangGraph) Deployment

LangSmith deployment for LangGraph agents is a strong pick when your team already writes agents in LangChain or LangGraph and cares about traces, evaluations, and runtime visibility. It feels like an engineer’s platform because it is one.

That’s a good thing when your consulting engagements depend on proving why an agent did what it did. It’s less helpful when the client just wants a managed service with minimal technical overhead.

Best for teams already committed to LangGraph

LangSmith shines in observability. Long-running flows, step-by-step execution, scheduling, and hybrid hosting options give technical teams the ability to debug stateful behavior in a way many business-first platforms don’t. For client work involving custom logic, tool chaining, and ongoing evaluation, that matters a lot.

The constraint is ecosystem fit. If your stack isn’t already centered on LangGraph, this can feel like adopting both a framework and a hosting pattern at once. Billing models tied to runs and uptime can also take time for consulting teams to price confidently.

If your delivery team debugs agents by reading traces every day, LangSmith is a serious contender. If your account team needs unified client billing and low-touch operations, it may feel too close to the metal.

7. LlamaIndex + LlamaCloud

Some client projects aren’t really “agent projects” first. They’re data access projects with an agent interface attached. That’s where LlamaIndex and LlamaCloud tend to fit best. If the core problem is parsing messy documents, building indexes, and orchestrating retrieval-heavy workflows, this stack is worth serious attention.

It’s particularly effective for consultancies delivering document-centric assistants in legal ops, internal knowledge, support archives, and research-heavy environments.

Useful for document-heavy client work

LlamaParse and the broader LlamaIndex ecosystem give developers strong building blocks for extracting value from unstructured content. The managed cloud services help, but this still feels more engineering-led than platform-led. You’re getting tools for data agents and microservice-style deployment, not a fully packaged multi-client hosting console.

That distinction matters. If your team has developers who can shape the architecture, LlamaIndex is flexible and capable. If your operating model depends on PMs or ops leads launching new client agents without engineering involvement, it’s less ideal.

An AI4SP analysis notes that organizations report better results when they treat agents as onboarded team members rather than tools, and it also highlights a gap in multi-instance isolation and governance guidance. LlamaIndex is excellent on the data side. It’s not the platform I’d pick first to solve that governance gap for a consulting portfolio.

8. Relevance AI (AI Workforce/Agents)

Relevance AI is packaged for business teams more than developer teams, and that changes the deployment experience in a good way. The visual canvas, templates, scheduling, analytics, and prebuilt GTM workflows make it easier to hand over an agent program to client-side operators.

That can be very attractive for consulting firms selling outcomes to revenue, support, and operations leaders rather than engineering departments.

Business teams can run with it quickly

The product is well suited to use cases where agents need to perform visible business work across tools and channels. Templated workflows reduce design time, and analytics help clients understand how the system is being used after launch. If your firm offers managed optimization after deployment, that packaging helps.

There’s still a trade-off. Credit and action-based pricing takes some effort to model, and stronger governance controls tend to sit higher in the product stack. For enterprise accounts that care about isolation, access boundaries, and audit discipline from day one, you’ll want to validate whether the governance model matches your service promises.

A DataM Intelligence market summary says more than 90% of enterprises are adopting AI agent solutions, but fewer than 25% have reached production deployment. Relevance AI can help business teams cross that gap faster than custom development, especially when the priority is packaged execution rather than bespoke infrastructure.

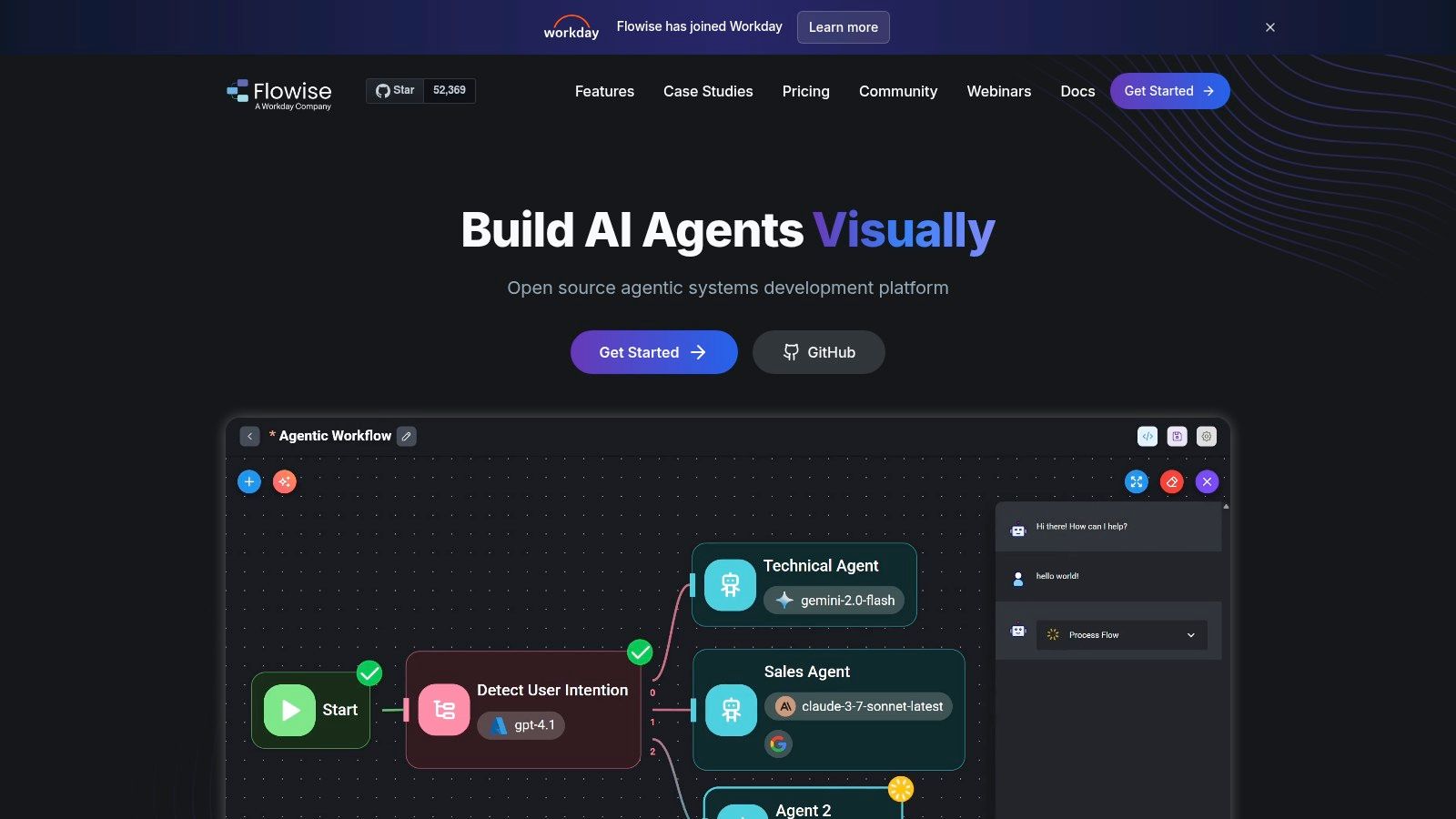

9. FlowiseAI Cloud

FlowiseAI Cloud has become a common pick for teams that want a visible workflow builder, an embeddable UI, and an easier path from prototype to hosted app. It’s approachable, flexible, and easier to explain to clients than many code-first stacks.

That makes it useful for startups and boutique consultancies doing lightweight agent delivery.

Good for lightweight builds and visible workflows

The platform gives teams a visual way to build and host flows, then package them into chat experiences with branding controls. If the client wants to see the logic and iterate collaboratively, Flowise is easier to work through than a more hidden runtime. Self-hosting remains an option when you need more control later.

The main limit is governance depth. Compared with hyperscaler stacks or products built around multi-instance administration, Flowise is lighter on the operational layer. Prediction-based quotas can also become a planning issue when clients move from casual usage to sustained production traffic.

One useful market signal here is that 31% of companies now automate processes via agents, particularly in sales and customer replies. Flowise can support that kind of rollout, especially when speed and visibility matter more than heavy-duty administrative control.

10. Dify.ai (Cloud and Self-Hosted)

Dify sits in a useful middle ground. It gives you an open-source foundation, a visual workflow layer, RAG support, model management, observability, and cloud or self-hosted deployment options. For consulting teams that want flexibility without starting from bare infrastructure, that balance is appealing.

It’s especially good when your clients vary widely. Some want managed convenience, others want code ownership and deployment control.

Open-source flexibility with a managed path

Dify’s strength is optionality. You can prototype in the cloud, use templates and visual workflows, then move into more controlled hosting patterns if the client needs it. That’s often a practical consulting advantage because not every buyer knows on day one how much control they’ll want later.

The weakness is that optionality can shift complexity back to your team. Complex stateful agents and advanced multi-agent behavior still need engineering effort, especially if the client expects production-grade rigor. You’re getting a flexible platform, not a magic simplifier.

For firms that need a configurable foundation and don’t mind doing some assembly, Dify is a solid choice. For teams optimizing around the fastest possible managed rollout with strong built-in isolation patterns, a more purpose-built AI employee agent hosting platform will usually reduce operational drag.

Top 10 AI Employee Agent Hosting Platforms, Feature Comparison

| Platform | Core features ✨ | UX & Security ★ | Pricing & Value 💰 | Target Audience 👥 | Unique Selling Points ✨ |
|---|---|---|---|---|---|
| 🏆 Donely | Click-to-deploy OpenClaw agents; 850+ integrations; multi-instance isolated containers | ★★★★★, 60–120s deploy, unified audit logs, HIPAA-ready, 99.9% SLA (Team+) | 💰 Free forever; Personal $25/mo per instance; Team $50; volume discounts (10–30%) | 👥 Founders, agencies, startups, ops, compliance teams | ✨ Multi-instance isolation + per-instance RBAC, unified billing, zero-DevOps |
| Microsoft Copilot Studio | Agent builder + MS365/Power Platform connectors; publish to Teams/Outlook | ★★★★, tenant governance, analytics, Copilot Credits metering | 💰 Copilot Credits + Azure costs; enterprise licensing model | 👥 MS365/Azure-enterprises, IT admins | ✨ Deep MS365 integration & tenant-level controls |
| Amazon Bedrock AgentCore | Serverless agent runtime, managed memory, policy & observability components | ★★★★, enterprise IAM, CloudWatch observability, fine-grained controls | 💰 Per-second CPU/memory billing; usage-based (complex meters) | 👥 AWS-centric engineering & infra teams | ✨ Modular runtime with fine-grained cost & security controls |
| Google Cloud Gemini Enterprise Agent Platform | Low-code Agent Studio + SDK, Model Garden access, MLOps integrations | ★★★★, strong data/MLOps stack, enterprise governance | 💰 Model + infra costs via GCP pricing; often quote-based | 👥 GCP/data/ML teams and enterprises | ✨ Gemini models, deep BigQuery & MLOps integrations |
| Zapier Agents (Zapier Central) | No-code agents + 8,000+ app integrations; live data & MCP tool access | ★★★, fastest setup; activity quotas limit heavy flows | 💰 Subscription + activity quotas; great for small teams | 👥 Non-technical teams: ops, sales, support | ✨ Massive integration library; no-code speed to production |
| LangChain LangSmith (LangGraph) | 1‑click deploys for LangGraph agents, tracing, MCP tool access, hybrid hosting | ★★★★, excellent tracing/evaluation; per-run meters | 💰 Per-run/uptime meters; developer-focused billing | 👥 LangChain dev teams & ML engineers | ✨ Purpose-built tracing, evaluations, hybrid/self-host options |
| LlamaIndex + LlamaCloud | RAG/document parsing, managed indexes, microservice agent deployments | ★★★, strong document/RAG UX; engineering-led | 💰 Parsing/indexing credits; not turnkey hosting | 👥 Data-heavy teams, RAG specialists, devs | ✨ Best-in-class parsing (LlamaParse) + OSS ecosystem |
| Relevance AI (Agents) | Visual multi-agent canvas, templates, 2,000+ integrations, A/B testing | ★★★, business-friendly UI; action/credit quotas | 💰 Actions/credit model; BYO-LLM on paid tiers | 👥 GTM, ops, support teams | ✨ Prebuilt templates, scheduling & analytics for business workflows |
| FlowiseAI Cloud | Visual flow builder, embeddable widget, managed hosting / self-host | ★★★, rapid prototyping; lighter governance | 💰 Affordable entry tiers; prediction-based quotas | 👥 Startups, prototypers, small teams | ✨ Fast setup + embeddable UI for quick launches |
| Dify.ai (Cloud & Self-Hosted) | OSS visual builder, RAG pipelines, agent runtime, API publishing | ★★★, flexible OSS; enterprise setup may require engineering | 💰 Cloud + self-host options; pricing varies | 👥 Teams wanting OSS flexibility & control | ✨ Open-source DNA + hybrid/self-host deployment options |

From Evaluation to Deployment: Your First Client Agent

The best platform isn’t the one with the longest feature page. It’s the one that matches how your consulting team delivers work. If your model depends on rapid onboarding, repeatable environments, and clear client boundaries, prioritize fast deployment, isolation, auditability, and billing controls first. Those are the features that keep projects profitable after the demo.

The broader market is moving quickly, but production discipline still lags. Gartner forecasts 40% of enterprise applications will embed task-specific AI agents by 2026, up from less than 5% in 2025. That doesn’t mean every consulting team should race into custom infrastructure. It means buyers will expect agent delivery to feel normal, dependable, and governed.

A practical first move is to pilot with one client and one bounded workflow. Pick a use case with visible operational value but contained risk. First-line support, lead handling, internal research assistance, or interview coordination are good candidates because teams can measure outcomes without redesigning the whole business. The adoption data supports that focus too. A DataM Intelligence market report describes first-line support deployments across a meaningful share of mid-to-large enterprises, with a substantial portion of inquiries handled autonomously and lower time-to-resolution and support costs once agents reached production.

From there, evaluate the platform through the lens of service delivery, not feature novelty.

- Provisioning speed: Can your team launch a new client environment in minutes instead of opening an infrastructure workstream?

- Isolation model: Does each client get clean data boundaries, or are you faking multi-tenancy with naming conventions and good intentions?

- Governance: Can project leads answer who accessed what, changed what, and connected which tools?

- Channel readiness: If the client asks for WhatsApp, Slack, or another frontline channel, can you support it without bolting on another stack?

- Commercial fit: Can you explain billing cleanly to the client and protect your own margins as usage grows?
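The five criteria above can be turned into a simple weighted scorecard so that platform comparisons stay consistent across engagements. The Python sketch below is one possible way to do it. The weights, the 1–5 rating scale, and the two candidate entries are assumptions for illustration, not ratings of any real product.

```python
# The five criteria from the checklist above.
# ASSUMPTION: these weights are illustrative; tune them to your delivery model.
WEIGHTS = {
    "provisioning_speed": 0.25,
    "isolation_model": 0.25,
    "governance": 0.20,
    "channel_readiness": 0.15,
    "commercial_fit": 0.15,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 1-5 ratings into a single weighted score on the same scale."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    return round(sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS), 2)


# Hypothetical ratings for two unnamed candidates, for illustration only.
candidates = {
    "platform_a": {"provisioning_speed": 5, "isolation_model": 4, "governance": 4,
                   "channel_readiness": 5, "commercial_fit": 3},
    "platform_b": {"provisioning_speed": 2, "isolation_model": 5, "governance": 5,
                   "channel_readiness": 2, "commercial_fit": 3},
}

# Print candidates from highest to lowest weighted score.
for name, ratings in sorted(candidates.items(), key=lambda kv: -weighted_score(kv[1])):
    print(name, weighted_score(ratings))
```

Agreeing on the weights before anyone sees a demo tends to keep the evaluation anchored to service delivery rather than feature novelty.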

The production bottleneck usually isn’t model quality. It’s operational discipline around deployment, access, and ownership.

If your team wants to build momentum, don’t over-architect the first rollout. Stand up a narrow pilot, prove value, and use that engagement to standardize your hosting pattern for the next client. That’s how consulting firms turn AI employee agent hosting from a custom engineering task into a repeatable service line. For teams thinking about adjacent workflows and research-heavy implementations, this guide to creating AI web research agents is a useful next read.

If your firm wants one platform that’s built around fast client onboarding, isolated instances, unified monitoring, and channel-ready deployment, Donely is the cleanest place to start. It’s especially well suited to agencies and implementation partners that need to launch OpenClaw-powered AI employees quickly, keep client environments separate, and scale without rebuilding the same infrastructure for every project.