You're probably not comparing OpenAI Superapp vs OpenClaw because you want another feature checklist. You're comparing them because a single AI assistant has turned into several. One for internal ops. One for sales. One for support. Maybe separate agents for each client account, business unit, or regulated workflow.

That's where most writeups stop being useful. They compare prompts, plugins, or model quality, then skip the harder question: how do you run multiple isolated AI agents without turning governance, billing, and data boundaries into a mess?

The practical answer starts with architecture, not demos. If you expect AI agents to stay small and developer-owned, a managed ecosystem can be the cleanest path. If you expect them to spread across teams, clients, and channels, the deployment model matters more than the landing page.

Table of Contents

- Choosing Your AI Workforce Beyond the Hype

- Understanding the Competing Philosophies

- Architecture and Deployment Models Compared

- Core Capabilities and Integration Ecosystems

- Governance Security and Enterprise Readiness

- Pricing Models and Total Cost of Ownership

- Final Verdict and Who Should Use Which

Choosing Your AI Workforce Beyond the Hype

A pilot goes live in one business unit and looks manageable. Three months later, five departments want their own agents, security wants isolated credentials, legal wants audit trails, and operations is stuck figuring out who owns the runtime, the policies, and the failure modes.

That is the fundamental decision behind OpenAI Superapp vs OpenClaw. A polished demo can hide the harder question: how do you run many isolated AI agents across teams, clients, and regulated workflows without creating credential sprawl, weak tenancy boundaries, and an approval process nobody can enforce?

The first agent proves the concept. The fifth exposes policy gaps. By the time a company is running agents for multiple departments or customer accounts, the work shifts from prompt quality to platform operations. Separate memory, scoped tool access, role-based permissions, logging, human approvals, and environment isolation stop being nice-to-have features and become day-one requirements.

The comparison most buyers need

If you're evaluating OpenAI Superapp vs OpenClaw, usability is only one layer of the decision.

The harder questions are operational:

- Isolation: Can one team's or client's agent access another agent's files, history, tools, or runtime context?

- Control: Who can approve actions, rotate secrets, limit permissions, and disable risky workflows?

- Scalability: Can platform teams provision and manage many agents through a repeatable model, or does each deployment become its own project?

- Compliance: Can security and audit teams inspect data handling, access paths, logs, and policy enforcement without reverse-engineering the system?

Practical rule: If the rollout will span more than one team, one client, or one regulated process, choose the platform with the cleaner control plane, not the better demo.

This is why the comparison matters beyond features. OpenAI Superapp and OpenClaw represent different operating models, and the operating model determines who carries the burden as adoption grows. One reduces infrastructure decisions by keeping more of the stack inside the vendor boundary. The other gives teams more control over deployment and tenancy design, but also more responsibility for getting governance and scale right.

Understanding the Competing Philosophies

A platform team rolling out agents across finance, support, and operations is not choosing between two feature lists. It is choosing who owns the control plane, who absorbs integration drift, and who gets paged when one tenant's workflow breaks another's.

OpenAI Superapp and OpenClaw start from different assumptions about that job.

OpenAI Superapp as a managed operating model

OpenAI Superapp follows a managed-product philosophy. The vendor defines the runtime, the tool boundaries, the execution model, and much of the surrounding user experience. That makes adoption faster for teams that want a governed application more than a framework.

The practical upside is consistency. Security reviews are narrower because there are fewer moving parts under customer control. Platform teams do not need to standardize their own agent runtime before they can ship value. For coding support, internal assistants, and controlled workflows inside a vendor-hosted environment, that reduction in design choices can lower failure rates.

The trade-off is architectural freedom. Enterprises with strict tenant isolation rules, custom approval paths, private network dependencies, or provider-level routing policies eventually hit the edge of what a managed product is designed to expose. At that point, the question stops being "Does it have the feature?" and becomes "Can we enforce our operating model without fighting the product?"

OpenClaw as an operator framework

OpenClaw follows a framework philosophy. It gives the customer more say over runtime placement, model selection, tool wiring, and execution context. That is attractive for organizations building agent fleets across business units, clients, or regulated environments where one standard hosted experience is not enough.

That freedom is useful, but it is not free.

Someone has to define tenancy boundaries, package repeatable deployments, manage secrets, patch dependencies, monitor browser and OS automation, and decide how much autonomy any given agent should have. Teams evaluating self-hosting usually learn this quickly during setup. A guide to installing OpenClaw on a VPS makes the point clearly. Getting the software running is the easy part. Operating it safely for multiple isolated users is the main project.

This difference matters because enterprise agent programs rarely stay single-player. One assistant becomes ten. Ten become a shared service. Then legal asks for audit trails, security asks for permission boundaries, and business units ask for different tools, models, and retention rules.

| Philosophy | OpenAI Superapp | OpenClaw |
|---|---|---|
| Product shape | Managed application | Open framework |
| Control model | Vendor-defined defaults | Customer-defined policies and runtime choices |
| Operational burden | Lower upfront for customer teams | Higher unless the team builds a strong platform layer |
| Multi-tenant fit | Better when shared controls are acceptable | Better when each team or client needs different isolation and execution rules |
| Best fit | Teams optimizing for speed and reduced infrastructure ownership | Teams optimizing for control, customization, and deployment flexibility |

The primary dividing line is governance depth. OpenAI Superapp reduces the number of decisions your team must make. OpenClaw lets you make far more of them. Enterprises succeed with the first model when standardized controls are enough. They succeed with the second when they have the engineering discipline to turn flexibility into a repeatable operating model.

Architecture and Deployment Models Compared

A pilot agent rarely stays a pilot. The hard part starts when three business units each want their own agent, their own tool access, and their own retention policy. Architecture decides whether that request becomes a quick configuration change or a platform program.

Early comparison table

| Deployment factor | OpenAI Superapp | OpenClaw |
|---|---|---|
| Runtime location | OpenAI cloud | Local or self-managed environment |
| Data persistence | On OpenAI servers | Local DuckDB storage |
| Offline behavior | Cloud-dependent | Can support offline capability |
| OS and browser control | More constrained and platform-defined | Full OS and browser control |
| Ops burden | Low for the customer | High unless abstracted by managed hosting |
| Sovereignty posture | Vendor-hosted | Customer-controlled |

Runtime location shapes every operational decision

The practical split is straightforward. OpenAI Superapp runs inside the vendor environment. OpenClaw can run on infrastructure you control, close to your data, browser sessions, and internal systems.

That sounds like a hosting choice. In practice, it changes who owns patching, secrets distribution, audit collection, backup design, tenant isolation, and failure recovery.

Managed deployment reduces the number of platform decisions your team has to make. That helps when the goal is fast rollout across internal teams with shared controls. The cost is standardization. If one group needs custom browser automation, another needs local execution, and a third needs a separate storage boundary, you are working inside the vendor's model.

OpenClaw gives platform teams more room to shape the runtime. They can place agents on dedicated hosts, restrict network paths, choose storage patterns, and separate tenants more aggressively. They also inherit the work that comes with those choices. The moment an enterprise needs multiple isolated agents, self-hosting stops being a simple app install and starts looking like platform engineering.

A practical starting point is this OpenClaw VPS installation walkthrough. It covers the first layer of the job. The larger effort is everything that comes once the process is running.

Multi-tenancy is where the real complexity shows up

One agent on one machine is easy to reason about. Ten agents serving different teams or clients are not.

Now the design questions become operational. Do tenants share a runtime or get dedicated workers? Where are prompts, session history, and tool credentials stored? How do you stop one tenant's browser session, vector data, or logs from leaking into another tenant's boundary? Who can approve tool changes, model changes, or retention exceptions?

OpenAI Superapp removes part of that burden because the vendor already defines much of the control plane. For organizations that can accept common guardrails, that is a real advantage. Procurement is simpler. Operations are more predictable. Central teams spend less time building the plumbing.

OpenClaw is stronger when isolation rules differ by business unit, region, or customer contract. But that strength only shows up if the team builds repeatable controls around it. In production, that usually means separate execution pools, secret scopes per tenant, policy-based routing, audit pipelines, and a versioned release process for prompts and tools.
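A rough sketch of what "repeatable controls" can look like in practice: a per-tenant record plus a check that flags any execution pool or secret scope shared across tenants. Everything here is hypothetical. `TenantConfig`, its fields, and the pool and scope names are assumptions made for illustration and are not part of OpenClaw or any vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantConfig:
    """Hypothetical per-tenant deployment record (not an OpenClaw schema)."""
    tenant_id: str
    execution_pool: str   # worker pool that runs this tenant's agents
    secret_scope: str     # namespace holding this tenant's credentials
    release_version: str  # pinned version of prompts and tools


def find_shared_boundaries(tenants: list[TenantConfig]) -> list[str]:
    """Describe every pool or secret scope that more than one tenant uses."""
    problems: list[str] = []
    for field_name in ("execution_pool", "secret_scope"):
        owners: dict[str, list[str]] = {}
        for tenant in tenants:
            owners.setdefault(getattr(tenant, field_name), []).append(tenant.tenant_id)
        for value, ids in owners.items():
            if len(ids) > 1:
                problems.append(f"{field_name} '{value}' shared by {', '.join(sorted(ids))}")
    return problems


# Placeholder tenants: two of them deliberately share an execution pool.
tenants = [
    TenantConfig("finance", "pool-finance", "secrets/finance", "v1"),
    TenantConfig("support", "pool-shared", "secrets/support", "v1"),
    TenantConfig("client-a", "pool-shared", "secrets/client-a", "v1"),
]
violations = find_shared_boundaries(tenants)
```

A check like this is only useful if it runs automatically, for example as a gate in the release pipeline, so a shared boundary is caught before a tenant is provisioned and not during an incident review.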

One agent can be a product feature. Many isolated agents become an internal service platform.

That distinction affects staffing. Managed products fit smaller platform teams because much of the runtime management stays with the vendor. Open frameworks fit organizations that already know how to run shared services with SSO, observability, CI/CD, policy enforcement, and incident response.

The decision usually comes down to operating model. Choose OpenAI Superapp if standardized controls and lower infrastructure ownership matter more than deep runtime customization. Choose OpenClaw if the business needs isolated execution patterns, custom governance, or deployment flexibility that a managed product cannot expose.

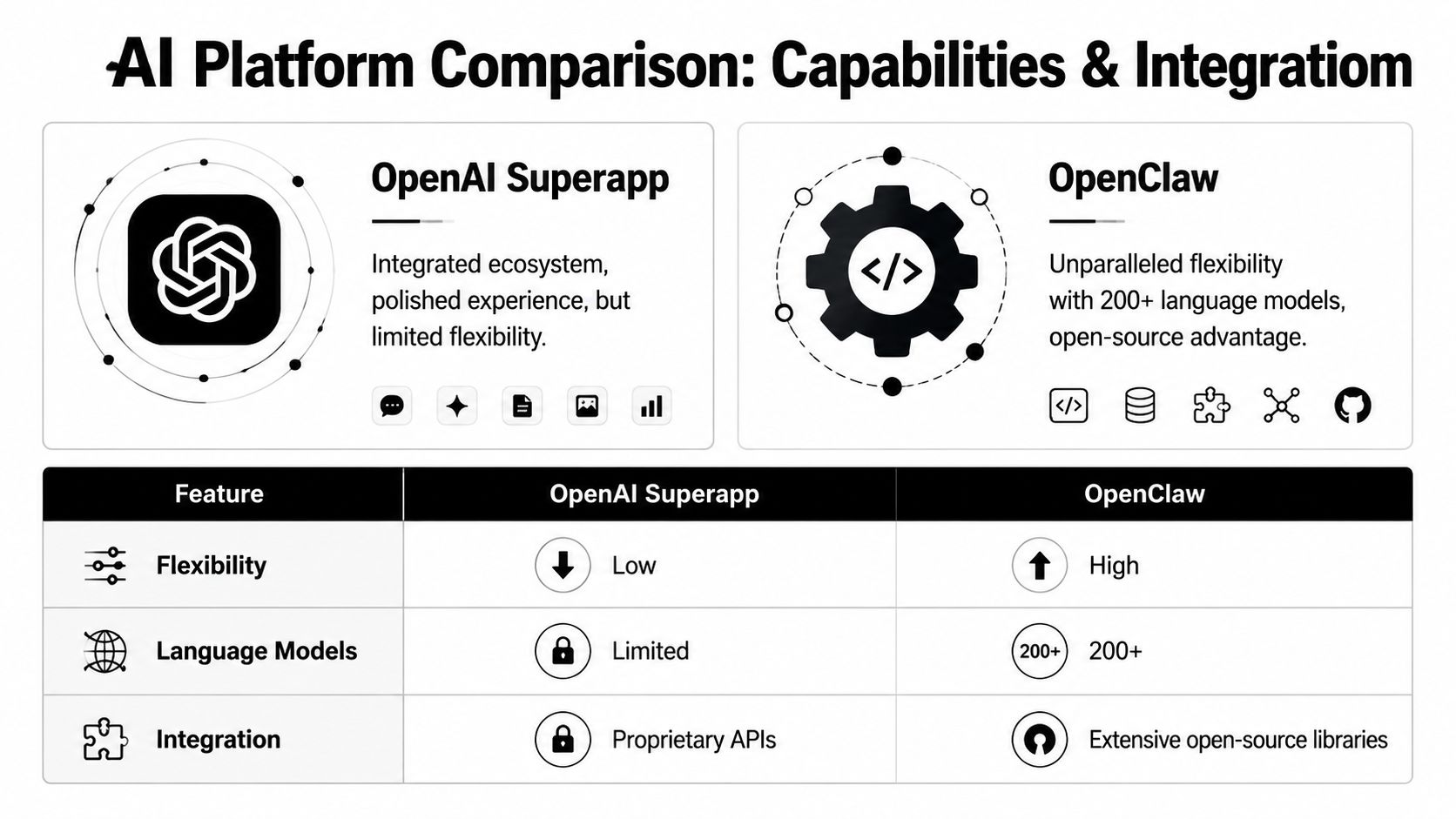

Core Capabilities and Integration Ecosystems

Capabilities start to matter after the platform is deployed, isolated, and connected to the systems the business already uses. In practice, this section is less about feature checklists and more about what your team can run across many agents without creating an integration mess.

Model choice changes operating flexibility

The first operational split is model portability. OpenAI Superapp keeps you inside a managed model stack. OpenClaw is built for teams that want to route workloads across different model providers, including hosted and local options.

That choice affects day-two operations more than the initial rollout.

A multi-agent environment usually needs more than raw model quality. It needs routing rules, fallback behavior, cost controls, and a way to assign the right model to the right tenant or workflow. A finance review agent may need a different provider and logging posture than an internal research bot. A customer-facing support agent may need a cheaper model for routine triage and a stronger one only for escalations.
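To make the routing idea concrete, here is a minimal sketch of a per-tenant, per-workflow routing table with a fallback model and a cost ceiling. It is an illustration under stated assumptions, not how either product implements routing: the `Route` type, the `pick_model` function, the model names, and the budget figures are all invented placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    primary: str
    fallback: str
    max_cost_per_call: float  # budget ceiling in an arbitrary currency unit


# Hypothetical routing table keyed by (tenant, workflow); model names are placeholders.
ROUTES = {
    ("support", "triage"): Route("small-model", "small-model-backup", 0.01),
    ("support", "escalation"): Route("large-model", "small-model", 0.10),
    ("finance", "review"): Route("private-model", "private-model-backup", 0.25),
}


def pick_model(tenant: str, workflow: str, estimated_cost: float, unavailable: set[str]) -> str:
    """Choose a model for a request, honoring the cost ceiling and known outages."""
    route = ROUTES.get((tenant, workflow))
    if route is None:
        raise KeyError(f"no route configured for {tenant}/{workflow}")
    if estimated_cost > route.max_cost_per_call:
        raise ValueError("estimated cost exceeds the route's budget")
    for candidate in (route.primary, route.fallback):
        if candidate not in unavailable:
            return candidate
    raise RuntimeError("primary and fallback are both unavailable")
```

Keying the table by tenant and workflow, and failing loudly when no route exists, keeps one team's model choice from silently becoming another team's default.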

OpenClaw fits that pattern better because provider choice is part of the framework design. Teams can build orchestration flows where one agent plans, another extracts data, and a third handles enrichment or verification. For organizations evaluating broader enterprise patterns, this OpenClaw enterprise deployment analysis gives useful context on how that model choice connects to production architecture.

OpenAI Superapp is simpler when the company already accepts OpenAI as the default vendor and wants fewer moving parts. That simplicity has value. It reduces integration variance, shortens testing paths, and makes support easier for smaller platform teams.

Tools and channels determine where agents can actually work

Integration breadth matters because enterprise agents rarely live in one interface. They show up in Slack, email workflows, ticket queues, internal portals, and approval systems. If the platform cannot reach those channels cleanly, the agent stays stuck in a demo environment.

OpenClaw is oriented toward broad orchestration. It is commonly used with workplace and messaging channels, custom tools, and external systems that sit outside a single vendor cloud. That makes it a better fit for teams building task workers that operate across departments or customer environments.

OpenAI Superapp is more opinionated. The ecosystem is tighter and easier to standardize, which helps when the goal is a managed assistant experience inside one primary environment. The trade-off is narrower portability. If a business unit later asks for the same agent pattern inside another channel, or under a different provider policy, the path is less flexible.

Security teams should care about this too. Every added connector increases review scope, token handling requirements, and failure modes. The AuditYour.App guide to AI security is a useful reference for testing agent integrations before they get broad access to business systems.

The ecosystem question is really a scaling question

A single agent can tolerate manual integration work. Fifty isolated agents cannot.

That is where the comparison gets more practical. OpenClaw gives teams a wider surface for tools, channels, and orchestration patterns, which is useful when each tenant or department needs a slightly different toolchain. OpenAI Superapp gives teams a more controlled ecosystem, which reduces variation and makes standardized deployments easier to support.

The trade-off is clear:

| Capability area | OpenAI Superapp | OpenClaw |
|---|---|---|
| Primary orientation | Managed assistant experience in a vendor-controlled environment | Cross-system orchestration and custom agent workflows |
| Model portability | OpenAI-centered | Multi-provider and local-model friendly |
| Parallel task design | More constrained from the buyer side | Better suited to orchestrated worker patterns |
| Channel reach | More app-centric | Better aligned with messaging, workplace, and custom channels |

For enterprises running many isolated agents, the better ecosystem is usually the one that matches the operating model already in place. Choose OpenAI Superapp if standardization matters more than extensibility. Choose OpenClaw if different teams, tenants, or regulated workloads need different tools, models, and execution paths.

Governance Security and Enterprise Readiness

A pilot agent usually passes security review. The problems start when the same company needs separate agents for HR, finance, support, and three external clients, each with different data boundaries, approval rules, and audit requirements.

Governance fails at the tenancy layer first

This is the part many OpenAI Superapp vs OpenClaw comparisons skip. The essential question is not whether one agent can call tools safely. It is whether fifty agents can run in parallel without sharing secrets, logs, memory, or approval paths across the wrong boundary.

That requirement changes the evaluation.

OpenAI Superapp usually gives enterprise buyers a cleaner starting point for centralized policy, vendor-managed controls, and standardized administration. OpenClaw gives infrastructure teams more freedom to define isolation, model routing, hosting location, and workflow behavior. That freedom matters in regulated environments, but it also shifts more responsibility onto the team operating the platform.

In practice, governance for multi-instance deployments comes down to a short list of design decisions:

- Secret isolation: each tenant or department needs separate credentials, rotation policy, and storage scope

- Data boundaries: prompts, uploaded files, memory, and action results need clear separation by workspace or instance

- Role control: admins, builders, reviewers, and auditors should not all have the same permissions

- Approval policy: high-risk actions need human review, not just tool access

- Audit coverage: security teams need logs that support investigations without exposing unrelated tenant data

These are architecture questions, not product marketing questions.
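The role-control and approval-policy items above can be sketched in a few lines. This is a simplified illustration, assuming a static permission map and a fixed set of high-risk actions. The role names come from the list above, while the permission strings, action names, and function names are hypothetical.

```python
from enum import Enum
from typing import Optional


class Role(Enum):
    ADMIN = "admin"
    BUILDER = "builder"
    REVIEWER = "reviewer"
    AUDITOR = "auditor"


# Hypothetical permission map; a real deployment would load this from policy config.
PERMISSIONS = {
    Role.ADMIN: {"rotate_secret", "disable_agent", "edit_tools"},
    Role.BUILDER: {"edit_tools"},
    Role.REVIEWER: {"approve_action"},
    Role.AUDITOR: {"read_logs"},
}

# Placeholder action names that should never run without human review.
HIGH_RISK_ACTIONS = {"send_payment", "delete_records", "email_external"}


def can(role: Role, permission: str) -> bool:
    """True if the role holds the named permission."""
    return permission in PERMISSIONS[role]


def may_execute(action: str, approved_by: Optional[Role]) -> bool:
    """Low-risk actions run directly; high-risk ones need an approver with the right role."""
    if action not in HIGH_RISK_ACTIONS:
        return True
    return approved_by is not None and can(approved_by, "approve_action")
```

Note that in this sketch even an admin cannot approve a high-risk action. Separating "can configure the platform" from "can approve what agents do" is a design choice, not a requirement, but it makes the audit story easier to defend.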

Auditability helps only if operations can enforce it

OpenClaw has a real advantage for organizations that need to inspect the stack, choose where workloads run, and avoid being locked into one vendor boundary. That makes it easier to align the platform with internal control requirements, data residency rules, or customer-specific hosting terms.

But open code does not create safe operations by itself.

A self-managed framework still needs hardened deployment templates, repeatable policy enforcement, centralized logging, and a way to prove that one tenant cannot access another tenant's tools or artifacts. If those controls are inconsistent across environments, the audit trail looks clean right up until an incident.

Security teams usually ask a more practical set of questions than product teams expect. Where are secrets stored? Who can approve an outbound action? Can logs be retained per tenant? Can the platform support legal hold, incident review, and access recertification? Can you disable one compromised agent without affecting every other workload?
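Two of those questions, per-tenant logs and disabling a single agent, can be illustrated with a toy registry. This is an in-memory sketch with hypothetical names (`AgentRegistry`, the agent IDs, the log messages). A real system would sit behind a database, authentication, and durable audit storage.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRegistry:
    """Hypothetical in-memory registry of agents, owners, and log entries."""
    enabled: dict[str, bool] = field(default_factory=dict)     # agent_id -> enabled flag
    owner: dict[str, str] = field(default_factory=dict)        # agent_id -> tenant_id
    logs: list[tuple[str, str]] = field(default_factory=list)  # (agent_id, message)

    def register(self, agent_id: str, tenant_id: str) -> None:
        self.enabled[agent_id] = True
        self.owner[agent_id] = tenant_id

    def disable(self, agent_id: str) -> None:
        """Kill switch for one agent; other agents are untouched."""
        self.enabled[agent_id] = False

    def record(self, agent_id: str, message: str) -> None:
        """Log an action, refusing anything from a disabled or unknown agent."""
        if not self.enabled.get(agent_id, False):
            raise PermissionError(f"agent {agent_id} is disabled or unknown")
        self.logs.append((agent_id, message))

    def logs_for_tenant(self, tenant_id: str) -> list[str]:
        """Return only the log messages that belong to one tenant."""
        return [msg for agent_id, msg in self.logs if self.owner.get(agent_id) == tenant_id]


registry = AgentRegistry()
registry.register("hr-bot", "hr")
registry.register("finance-bot", "finance")
registry.record("hr-bot", "opened onboarding ticket")
registry.record("finance-bot", "drafted invoice summary")
registry.disable("finance-bot")                        # isolate the compromised agent
registry.record("hr-bot", "closed onboarding ticket")  # other tenants keep working
```

The point of the sketch is the shape of the guarantees: disabling is scoped to one agent, and log retrieval is filtered by owner so an investigation into one tenant does not expose another's history.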

Those questions decide enterprise readiness.

For teams building that control layer, this AuditYour.App guide to AI security is a useful reference because it focuses on validation work such as testing agent integrations, action paths, and exposure points instead of treating AI security as a policy-only exercise.

If your evaluation centers on how to operate isolated agent fleets, this enterprise perspective on NemoClaw and OpenClaw is worth reviewing because it frames the problem around tenancy, deployment discipline, and governance overhead.

The trade-off is straightforward. OpenAI Superapp reduces control-plane work, but you accept more vendor-defined boundaries. OpenClaw gives you stronger customization and hosting control, but your team has to build and maintain the isolation, evidence, and operating model that enterprise governance requires.

Pricing Models and Total Cost of Ownership

A pilot with five agents often looks cheap. The bill changes once those agents are split across business units, client accounts, or regulated environments, each with its own models, storage rules, approval paths, and support expectations.

That is why list pricing rarely answers the actual question.

Cost starts with architecture, not the rate card

OpenClaw usually looks cheaper at first glance because the framework itself does not carry the same kind of platform fee as a managed product. Teams still pay for model inference, and they may shift those costs across providers or local inference if they have the hardware and operational discipline to run it well.

OpenAI Superapp is easier to forecast at the application layer because usage, storage, and vendor-managed operations sit in one commercial model. That simplicity has value, especially for teams that need to launch fast and do not want to staff a platform function around agent runtime operations.

The trade-off shows up once the deployment footprint grows. A self-managed stack gives procurement flexibility and hosting control, but it also creates work in cluster design, tenant isolation, upgrade testing, logging pipelines, secret rotation, and on-call support. In a multi-tenant environment, those are not edge concerns. They become part of the product you are operating.

What a real TCO review should include

I usually break the analysis into four cost buckets:

- Platform charges for the managed service, storage, and vendor features.

- Model spend across whichever LLM providers, embedding services, or local inference paths you use.

- Operating cost for infrastructure, observability, support, security reviews, and release management.

- Governance cost for tenant provisioning, access controls, audit evidence, policy enforcement, and exception handling.

That fourth bucket gets missed in lightweight comparisons. It matters most when one AI platform serves several internal teams or external customers. Every isolated workspace, retention policy, connector approval, and incident review path adds overhead. Managed platforms absorb more of that burden inside the product. Open frameworks push more of it onto your engineering and security teams.
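The four buckets can be turned into a small model for comparing scenarios. The sketch below assumes, for simplicity, that only governance cost scales with tenant count. The class name and every figure are placeholders chosen to exercise the code, not real pricing for either product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyCosts:
    """Hypothetical monthly cost model built from the four buckets above."""
    platform: float               # managed service, storage, vendor features
    model: float                  # LLM, embedding, or local inference spend
    operating: float              # infrastructure, observability, support, releases
    governance_per_tenant: float  # provisioning, access reviews, audit evidence

    def total(self, tenants: int) -> float:
        """Total monthly cost, assuming only governance scales per tenant."""
        return self.platform + self.model + self.operating + self.governance_per_tenant * tenants


# Placeholder inputs only; replace with your own quotes and staffing estimates.
managed = MonthlyCosts(platform=1000, model=500, operating=200, governance_per_tenant=20)
self_managed = MonthlyCosts(platform=0, model=400, operating=1500, governance_per_tenant=100)

managed_total = managed.total(tenants=5)
self_managed_total = self_managed.total(tenants=5)
```

Running the same model at several tenant counts is more informative than a single total, because the governance term is where the two operating models tend to diverge as the footprint grows.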

A lightweight outside benchmark can still help frame the build-versus-buy discussion. This AI product planner costs reference is useful for estimating scope before a team commits to a self-managed agent platform that will need real operational ownership.

For smaller teams, managed systems often cost more per line item and less overall because they reduce engineering drag. For enterprises that already run shared platform services and need stricter control over tenant boundaries, OpenClaw can become more economical over time, but only if the organization is prepared to operate it as a governed internal platform rather than a weekend project.

If you are comparing this category more broadly, this guide to the best AI agents for different operating models is a useful companion, especially when your shortlist includes both managed products and open frameworks.

Final Verdict and Who Should Use Which

The deciding factor in OpenAI Superapp vs OpenClaw is not model quality. It is whether your team can run the system you choose after one pilot becomes ten agents, then fifty, each with different users, data boundaries, and approval rules.

Choose OpenAI Superapp if you want a controlled service with fewer platform decisions to make. That usually fits a product team, engineering org, or internal enablement group that needs fast rollout, standard guardrails, and one place to manage access without building its own agent control plane. A VP of Engineering might put it this way: "I need teams shipping useful agents this quarter. I do not need another platform for SRE and security to operate."

Choose OpenClaw if your requirements start with isolation, policy control, or deployment flexibility. That is the better fit for a central platform team, a regulated enterprise, or an agency serving multiple clients where each tenant needs separate connectors, runtime policies, audit trails, and sometimes separate infrastructure. A CISO or platform lead would frame it differently: "We can support the operational load, but we cannot accept shared assumptions about data access, retention, or runtime location."

That distinction matters most in multi-tenant environments. The hard problem is not getting one agent to work. The hard problem is running many agents with clear tenant boundaries, repeatable provisioning, cost visibility, and incident handling that does not turn into manual coordination across teams. If you are comparing adjacent options, this guide to the best AI agents for different operating models helps place managed products and open frameworks in the right bucket.

Decision Checklist

| Consideration | Choose OpenAI Superapp If… | Choose OpenClaw If… |
|---|---|---|
| Primary use case | Your center of gravity is coding and developer workflows | Your agents need broader business automation |
| Hosting preference | You want vendor-managed cloud simplicity | You need more control over runtime and data location |
| Governance model | Your team prefers standardized vendor boundaries | Your team requires stronger isolation patterns and can design them |
| Model strategy | You're comfortable staying inside one ecosystem | You want portability across multiple model providers |
| Ops capacity | You don't want to build internal agent infrastructure | You have platform capability or a reason to invest in it |
| Scale pattern | You expect a smaller, tighter deployment footprint | You expect many distinct agents across teams or clients |

A practical rule helps. If your biggest risk is slow delivery, choose the managed product. If your biggest risk is weak tenant isolation, policy drift, or loss of deployment control, choose the framework and treat it like an internal platform from day one.

If you want OpenClaw's flexibility without turning multi-instance hosting, isolation, monitoring, and billing into an internal platform project, Donely gives teams a way to deploy and manage OpenClaw-powered AI employees from one dashboard. It is built for the messy middle where one agent turns into many, and governance matters as much as capability.